This site operates as an independent editorial operation. Advertising, sponsorships, and other non-editorial materials represent the opinions and messages of their respective origins, and not of the site operator. Part of the FM Tech advertising network.

Entire site and all contents except where otherwise noted © Copyright 2001-2010 by Glenn Fleishman. Some images ©2006 Jupiterimages Corporation. All rights reserved. Please contact us for reprint rights. Linking is, of course, free and encouraged.

Powered by Movable Type

The Bluetooth SIG will create a version that runs over Wi-Fi: Bluetooth comprises applications and radio standards. The applications include standard profiles that developers use to add features like keyboard and input device access, file transfer, and dial-up networking. The Bluetooth SIG has a long-range plan to keep Bluetooth relevant by essentially adding more radio technologies underneath, not just the 1 Mbps version found in Bluetooth 1.x and the 3 Mbps version in the Enhanced Data Rate (EDR) part of 2.x+EDR.

Ultrawideband (UWB) was one of the preferred newer radio standards. The SIG decided to support it in March 2006 because UWB seemed near term at that point, and because it was part of the original migration path for personal area networking in the IEEE 802.15 group, with which the Bluetooth SIG has some coordination. (UWB was to be the radio standard for 802.15.3a until that task group disbanded over friction caused by a now-dropped original flavor of UWB from what is now Motorola spin-off Freescale.) UWB is low-power and short-range, making it ideal.

But it's hardly on the market yet and is way too expensive. This pushes back Bluetooth over UWB in handsets to something like 2009. TechWorld notes that UWB vendors say that UWB handsets will be on the market (in Asia) within six months. Of course, UWB chipmakers and manufacturers have been telling me since 2006 that UWB products will be shipping in a few months. They weren't lying; complications ensued. I accept that. But as regards UWB in shipping hardware, I'm now from Missouri: show me.

As a result, TechWorld reports, the SIG's chair, ironically a Motorola employee, said that they would focus on building Bluetooth over Wi-Fi. Details aren't available, and one UWB vendor says that Wi-Fi and Bluetooth are incompatible due to security models.

Posted by Glenn Fleishman at 4:00 PM | Permanent Link | Categories: Bluetooth, Hardware, UWB

Boingo picks up seven airport Wi-Fi networks: Boingo bought Concourse Communications last year to conserve revenue, improve their bargaining power with international hotspot network operators, and expand their retail brand. They've rolled out a few more airports in the interim, but this Sprint deal lets them pick up seven with one blow. (I wrote a lengthy article on the state of Boingo on 17 Oct 2007.)

The deal allows Sprint Wi-Fi subscribers to retain inclusive access to these airports and gain the 16 that Boingo operates, which include the big three around New York City (JFK, Newark, and LaGuardia) and Chicago's two airports.

This acquisition puts Boingo in a position where they will surely be able to work with every airline that offers in-flight Internet access, as those airlines will want to provide some form of seamless access from airport to airport, too.

Boingo charges $22 per month for unlimited access to U.S. hotspots and $39 per month for unlimited access to its worldwide network. A single session costs $8.

The airports are Houston William P. Hobby (HOU), Houston George Bush Intercontinental (IAH), Memphis International (MEM), Milwaukee General Mitchell International (MKE), Oakland International (OAK), Louisville International-Standifer Field (SDF), and Salt Lake City International (SLC). These are mostly solid second-tier airports, with the exception of Houston George Bush (No. 4 in the U.S. in 2006 by passenger traffic) and Salt Lake City (No. 23).

Posted by Glenn Fleishman at 1:05 PM | Permanent Link | Categories: Aggregators, Air Travel, Hot Spot

Buffalo Technology was enjoined starting Oct. 1 from selling 802.11a and g gear in the U.S.: The Australian technology research agency CSIRO has a broad patent in the U.S. that's being widely fought. A Texas court, in a jurisdiction where patentholders often try to have their cases heard, found that Buffalo infringed CSIRO's rights. Buffalo has appealed. Buffalo's posting on their wireless products page notes, "Recently, Microsoft, 3COM Corporation, SMC Networks, Accton Technology Corporation, Intel, Atheros Communications, Belkin International, Dell, Hewlett-Packard, Nortel Networks, Nvidia Corporation, Oracle Corporation, SAP AG, Yahoo, Nokia, and the Consumer Electronics Association filed briefs in support of Buffalo's position that injunctive relief is inappropriate in this case." Buffalo can sell existing inventories and has permission to replace products under warranty.

Although Buffalo mentions 802.11a and g, Draft N products are apparently under the import ban, too, perhaps because they incorporate 802.11a and g.

The Register notes that the Microsoft-led group, which includes 3Com, SMC Networks, and Accton, is pushing the argument from eBay v. MercExchange, the 2006 Supreme Court decision that makes injunctions hard to obtain in most cases in which a patentholder isn't actively involved in making a product that uses the patent.

CSIRO's actions don't threaten 802.11n so much as impose a fee on it. If CSIRO prevails, then every piece of 802.11a, g, and n (but not b) gear would have a small licensing fee attached to it, which would increase the cost of products by a tiny amount. Companies might also have to pay back fees, either because they lost a lawsuit or because they negotiated a license.

If CSIRO can't prevail in U.S. courts, then it's business as usual. [link via The Register]

Posted by Glenn Fleishman at 1:45 PM | Permanent Link | Categories: Legal

After more than a year of providing hints at their capability, Eye-Fi has released their flagship product: a 2 GB Secure Digital flash card with built-in Wi-Fi for $100: The Eye-Fi Card connects over a Wi-Fi network using its own onboard processor to transfer images from the card to a computer or upload the photos to Eye-Fi's servers for further distribution. The camera has to be powered on within range of a Wi-Fi network; there's no other intervention needed.

The company has partnered with 17 major photo-sharing, photo-finishing, and social-networking services and sites to enable direct transfer to one or more of those services when pictures are uploaded, based on your choices.

The Eye-Fi is not a generic Wi-Fi adapter: that is, it doesn't magically add Wi-Fi capabilities to a digital camera. Rather, it's a separate computer that happens to live within an SD card and can access the same stored data that the digital camera can. I expect to review the unit in the next week or two.

In an interview, Jef Holove, Eye-Fi's chief executive, explained that the company had homed in on a very simple offering, with the potential to become more complicated later as the market dictated. He describes Eye-Fi as "a wireless memory card that lets you upload your photos," a concise summary.

The Eye-Fi's intent is to allow zero-effort uploading of photographs taken on a digital camera. I haven't seen anything close to this amount of simplicity, including in the consumer cameras that have Wi-Fi built-in from Nikon, Canon, and Kodak. Those cameras generally don't allow full-resolution Internet transfers of photos, and lock you into specific upload services, such as Kodak Gallery (renamed for the third time in a handful of years). Eye-Fi wanted to provide full-resolution uploads and no preferred service, and to eliminate the effort of initiating or managing the transfer.

An Eye-Fi needs to be set up before it's used in a camera. The device comes with a small USB dock, and software for Windows and Mac OS X that can configure internal settings in the card. The software mostly exists to connect you with Eye-Fi's Web site, where you create an account, enter Wi-Fi network settings (including passwords), and enter or sign up for any of the 17 services you may use or belong to. Various settings are then installed on the Eye-Fi and it's ready to go.

Whenever you're within range of any of the networks you've configured, the Eye-Fi transfers any pictures you've taken since the last transfer. The camera isn't involved. Holove said that there are three modes that the card can work in for transfers: transfer to the host computer; transfer to Eye-Fi's servers directly; or transfer to Eye-Fi's servers and then download to the host computer.
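To make the described behavior concrete, here's a rough Python model: the card watches for networks it knows and, when one appears, sends only the photos taken since its last transfer to a destination set by the mode. The class, the mode names, and the network names are hypothetical; Eye-Fi hasn't published how its firmware works, so treat this as a sketch under those assumptions.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import List, Set, Tuple


class TransferMode(Enum):
    # Hypothetical labels for the three modes Holove described.
    TO_COMPUTER = auto()              # send photos to the host computer
    TO_SERVER = auto()                # upload photos to Eye-Fi's servers
    TO_SERVER_THEN_COMPUTER = auto()  # upload; the computer downloads later


@dataclass
class CardModel:
    known_networks: Set[str]
    mode: TransferMode
    photos: List[str] = field(default_factory=list)
    next_unsent: int = 0  # index of the first photo not yet transferred

    def on_network_seen(self, ssid: str) -> List[Tuple[str, str]]:
        """Return (destination, photo) pairs sent when a network comes into range."""
        if ssid not in self.known_networks:
            return []  # not a configured network: do nothing
        pending = self.photos[self.next_unsent:]
        self.next_unsent = len(self.photos)
        # Each photo crosses the card's radio once. In the third mode the
        # host computer fetches copies from the server afterward, so the
        # camera's battery doesn't pay for a second transfer.
        dest = "computer" if self.mode is TransferMode.TO_COMPUTER else "server"
        return [(dest, photo) for photo in pending]


card = CardModel({"HomeNet"}, TransferMode.TO_SERVER_THEN_COMPUTER, ["a.jpg", "b.jpg"])
print(card.on_network_seen("CoffeeShop"))  # []
print(card.on_network_seen("HomeNet"))     # [('server', 'a.jpg'), ('server', 'b.jpg')]
card.photos.append("c.jpg")
print(card.on_network_seen("HomeNet"))     # [('server', 'c.jpg')]
```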

The last option sounds a little confusing: why download photos again rather than transfer them over the local network? Holove explained that it would double the battery usage to transfer the images twice, so they opted to retrieve the images after upload rather than reduce the camera's charge.

Holove said that they estimate the card consumes about 5 to 10 percent more battery than a camera would use otherwise; they found their beta testers hardly noticed the power consumption due to the increased capacity of modern batteries and more energy-efficient camera designs. The Wi-Fi component, an Atheros AR6001, uses very little energy while idle.
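A quick check on what "5 to 10 percent more battery" means in practice. Assuming the card's extra draw scales with the number of shots (an assumption; idle and transfer costs won't divide that neatly), a camera that uses a fraction x more energy gets 1/(1 + x) as many shots per charge. The 300-shot baseline is hypothetical.

```python
def shots_per_charge(baseline_shots: float, extra_fraction: float) -> float:
    """Shots per charge when each shot costs (1 + extra_fraction) times the energy."""
    return baseline_shots / (1 + extra_fraction)


BASELINE = 300  # hypothetical camera rated for 300 shots per charge

for extra in (0.05, 0.10):
    shots = shots_per_charge(BASELINE, extra)
    print(f"{extra:.0%} more energy -> about {shots:.0f} shots")
# 5% more energy -> about 286 shots
# 10% more energy -> about 273 shots
```

Under that assumption the shot count drops by slightly less than the stated percentage: about 4.8 and 9.1 percent.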

If you choose to upload photos, Eye-Fi's servers automatically transfer them to the service you selected. You can register at all the services you regularly use, and then, between uploading sessions, choose which single service gets the uploaded images. If an individual photo's size or resolution exceeds the maximum a given service allows, Eye-Fi's system resizes the image just for that service. (I'd prefer Eye-Fi uploaded to one or more services at once, but that's not in line with their approach, which is "keep it simple at this stage.")
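Per-service resizing of this kind amounts to scaling each image down, aspect ratio intact, until it fits that service's limit, and leaving the original alone everywhere else. Here's a minimal sketch; the service names and pixel limits are made up for illustration, and real services also cap file size, which this ignores.

```python
from typing import Dict, Tuple

# Hypothetical per-service limits on the longer edge, in pixels.
SERVICE_LIMITS: Dict[str, int] = {"service_a": 2048, "service_b": 1024}


def fit_within(width: int, height: int, max_long_edge: int) -> Tuple[int, int]:
    """Scale down so the longer edge is at most max_long_edge, keeping aspect ratio."""
    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        return width, height  # already small enough; send as-is
    scale = max_long_edge / long_edge
    return max(1, round(width * scale)), max(1, round(height * scale))


def size_for_service(size: Tuple[int, int], service: str) -> Tuple[int, int]:
    """Services without a listed limit get the full-resolution original."""
    limit = SERVICE_LIMITS.get(service)
    return size if limit is None else fit_within(size[0], size[1], limit)


original = (3264, 2448)  # an 8-megapixel photo
print(size_for_service(original, "service_a"))  # (2048, 1536)
print(size_for_service(original, "service_b"))  # (1024, 768)
print(size_for_service(original, "service_c"))  # (3264, 2448)
```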

There's no option to downsample photos on upload to reduce the upload time, however. Holove said that in this first iteration, they wanted to appeal to what they found was a common sentiment among photographers they're aiming at: the desire to upload full-resolution images. Holove said "As storage for these [photo-sharing] companies becomes cheaper and cheaper and cheaper, it becomes more affordable for these companies to store higher res images."

The Eye-Fi is shipping initially as a 2 GB SD card because higher capacities require the use of SDHC (SD High Capacity), which isn't supported on many older and less expensive cameras. SDHC is required for 4 GB and higher memory cards, and Holove said that the firm "wanted to launch a product that would work with all the SD cameras out there."
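The capacity rule behind that decision fits in a few lines. This sketch uses the SD Association's classes as they stood in 2007 (standard SD up to 2 GB, SDHC above that up to 32 GB); SDHC-capable hosts also read standard SD cards, which is why a 2 GB card reaches every SD camera. The function names are illustrative.

```python
def card_family(capacity_gb: float) -> str:
    """Classify a card by capacity (SD Association classes as of 2007)."""
    if capacity_gb <= 2:
        return "SD"    # standard capacity
    if capacity_gb <= 32:
        return "SDHC"  # requires an SDHC-aware camera or reader
    raise ValueError("larger than the 32 GB SDHC ceiling")


def camera_can_use(camera_supports_sdhc: bool, capacity_gb: float) -> bool:
    # SDHC cameras accept both classes; older cameras accept only standard SD.
    return card_family(capacity_gb) == "SD" or camera_supports_sdhc


print(camera_can_use(camera_supports_sdhc=False, capacity_gb=2))  # True
print(camera_can_use(camera_supports_sdhc=False, capacity_gb=4))  # False
```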

Initial partners are dotPhoto, Facebook, Flickr, Fotki, Kodak Gallery, Phanfare, Photobucket, Picasa Web Albums, Sharpcast and Gallery, Shutterfly, SmugMug, Snapfish, TypePad, VOX, Wal-Mart, and Webshots.

Posted by Glenn Fleishman at 11:01 PM | Permanent Link | Categories: Gadgets, Photography

Upping the ante for mobile devices, Atheros offers a series of chips that consume almost no standby power: In recent years, new chip designs for mobile devices have focused on three factors: integration, or the number of features packed into one chip to reduce the cost, form factor, and power use of multiple chips; size; and standby/idle power. That last can be the killer. You can have tiny chips, but if they draw several percent of their in-use power just to maintain status on a network or scan for networks, it's hard to get out of the gate.

The less power a chip consumes, the longer a mobile device lasts, and the more likely a manufacturer is to design for high-bandwidth uses. Atheros's AR6002 series (single-band g, dual-band a/g) consumes what the company calls "near-zero standby power," and 70 percent less power in active mode than competing offerings. The company's two examples: on a standard phone, the chip could provide 100 hours of VoIP or download 200 GB of data.
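Why standby power matters so much: a phone's Wi-Fi radio spends most of its time idle, so the idle figure dominates the average draw. This back-of-the-envelope model weights active and idle power by the fraction of time spent in each. Every number in it (battery size, radio power levels, the rest of the phone's draw) is hypothetical, chosen only to show the shape of the effect, not to model the AR6002.

```python
def battery_hours(battery_wh: float, active_w: float, idle_w: float,
                  active_fraction: float, rest_of_phone_w: float) -> float:
    """Hours of runtime for a duty-cycled radio plus a constant background draw."""
    radio_w = active_fraction * active_w + (1 - active_fraction) * idle_w
    return battery_wh / (radio_w + rest_of_phone_w)


# Hypothetical phone: 3.7 Wh battery, radio active 1% of the time at 0.5 W,
# and 10 mW for everything else while in standby.
for idle_mw in (20, 1):
    hours = battery_hours(3.7, 0.5, idle_mw / 1000, 0.01, 0.010)
    print(f"radio idle at {idle_mw} mW -> about {hours:.0f} hours")
# radio idle at 20 mW -> about 106 hours
# radio idle at 1 mW -> about 231 hours
```

In this toy example, cutting idle draw from 20 mW to 1 mW more than doubles standby life, even though active power never changes.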

Chips will ship in quantity in the first quarter of 2008.

Posted by Glenn Fleishman at 3:21 PM | Permanent Link | Categories: Chips

BelAir wasn't gauche enough to mention this fact, but you just need to look at old announcements: BelAir put out a press release today noting that service provider RedMoon had opted to replace its network in Addison, Texas, with BelAir's equipment, 170 nodes that will be deployed over four square miles by the end of this year. Addison features a number of company headquarters, including, the release notes, "Pizza Hut, Mary Kay Cosmetics, CompUSA, and Palm Harbor Homes."

Just over two years ago, RedMoon put in 80 Tropos nodes.

RedMoon isn't big on announcements; their Web site's Newsroom page announces "text to come"; the Web site copyright date is 2006. No other deployments are noted. The firm was purchased by NewMarket Technology, a shareholder, earlier this year. NewMarket is "on track" to establish "$10 Million [sic] in profitable annual revenue from its broadband subsidiary...", a June press release notes. This release also lists three projects in Texas: Addison, Temple, and a Chevron project in Burleson.

Posted by Glenn Fleishman at 10:30 AM | Permanent Link | Categories: Financial, Metro-Scale Networks, Municipal

AT&T backs off from St. Louis, Mo., Wi-Fi network, but let's not be too hard on them: The network hit a snag that cropped up after an 18-month negotiation between the city and the company: the 51,000 street lights that were the first candidates for mounting Wi-Fi receivers receive no power during the day, and are bank-switched, meaning that power is centrally switched on and off for large numbers of poles at once. Utility poles were looked at, but in St. Louis, these are located primarily in the wrong places, such as alleys. Other locations proved equally problematic, as did battery power. Street lamps could be rewired, but that would cost an estimated $28m.

I'm a bit surprised that there wasn't a survey of poles and lights as part of the process before AT&T bid, but the bidding process has varied city by city. In some cases, exhaustive inventories of city and utility facilities were assembled and enumerated; in others, that was all secondary to securing a basic deal.

AT&T will build out a square mile of service downtown for now.

Posted by Glenn Fleishman at 4:43 PM | Permanent Link | Categories: Metro-Scale Networks, Municipal

T-Mobile says that it will offer free use of its hotspots across parts of Southern California: The company has made this rather classy offer in the past, too, during other disasters. Service is free across six counties: Los Angeles, San Diego, Orange, San Bernardino, Ventura, and Santa Barbara. This includes airports, Starbucks, FedEx Kinko's, Borders, Hyatt Hotels, and Red Roof Inns, among other locations. Service will be free through Oct. 31 and encompasses 1,200 locations.

The press release notes, "The service is intended for those who have been displaced from their homes or are seeking refuge from the wildfires." Clearly, there's no enforcement of this, and I imagine there will be a big uptake from displaced workers, too, whether evacuated from their homes or not. There will be millions of people who have lost part or all their livelihood through this disaster.

AT&T kicks in 600 locations, too.

Posted by Glenn Fleishman at 3:43 PM | Permanent Link | Categories: Hot Spot

Gizmodo points out that the "wireless" element of Iogear's Wireless USB hub and dongle doesn't wash: The wireless part is the missing USB or network cable, but you still have to use cables to connect your USB devices to the hub, which itself requires AC power. Gizmodo found problems in setting up the Iogear system, which runs $200, and thought performance was poor. Separate drivers were required for the dongle and the hub.

These criticisms are all pretty justified. Ultrawideband (UWB) networking in the form of Wireless USB can't shine until UWB radios and drivers are installed at the factory on laptops and peripherals. UWB chip costs are already dropping, and it's likely that $500-and-higher electronics, like cameras and camcorders, will sport UWB by next year, as will computers. When you can associate a UWB device directly with your computer, no hub required, its utility will be much higher.

I've been waiting for that day to come for, oh, five years now. Maybe in 2008! (A technology reporter has to have constant optimism mixed with a jaded outlook.)

Posted by Glenn Fleishman at 12:51 PM | Permanent Link | Categories: UWB

From this week's MuniWireless conference in Santa Clara, Calif., three bits of news: The AP reports on the latest State of the Market Report from MuniWireless, which dropped the estimate for US spending on metro-scale wireless networks to $329m in 2007 from $460m in the 2006 report. It's still a hefty 35 percent rise above 2006 levels, and MuniWireless's Esme Vos noted elsewhere that the survey was done at the height of the muni-Fi crash in August.
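The report's two numbers also let you back out the implied 2006 baseline. Assuming the 35 percent rise is measured against actual 2006 spending, the arithmetic runs:

```python
estimate_2007 = 329.0     # $ millions, new report's 2007 estimate
earlier_estimate = 460.0  # $ millions, the 2006 report's figure
growth_over_2006 = 0.35   # stated rise above 2006 levels

implied_2006 = estimate_2007 / (1 + growth_over_2006)
downgrade = 1 - estimate_2007 / earlier_estimate

print(f"implied 2006 spending: ${implied_2006:.0f}m")     # $244m
print(f"cut from the earlier estimate: {downgrade:.0%}")  # 28%
```

So spending still grew by roughly $85m year over year, while the forecast shrank by more than a quarter.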

Also from the conference, News.com blogs on AT&T's talk about their involvement with muni-Fi: Marguerite Reardon interviews AT&T's, take a breath, senior executive vice president of legislative and external affairs, let it out now, who says, "Cities have also evolved in how they think about citywide Wi-Fi. It's a very different scenario when a city is looking to partner with the private sector than if they go out and use taxpayer money and issue bonds to build a network that will compete with services already offered by the private sector." Yeah, they evolved, you know, back in mid-2005. Where were you?

The blog entry notes that AT&T withdrew from Springfield, Ill., and were turned down by Chicago (Reardon says "dropped out," but I'm not sure that's the right nuance as I thought both EarthLink and AT&T made offers the city didn't like); their likely exit from St. Louis, Mo., isn't mentioned.

Finally, Wireless Silicon Valley puts on a brave face, but did any of us know that Azulstar was the lead in raising money? I kind of thought that Cisco and IBM's involvement, as in Sacramento, meant that they were taking on some of the financing to assure the network would be built. The Sacramento partnership is facing the same travail. Could it be that Cisco and IBM here, and Cisco, IBM, and Intel in Sacramento, just signed on to sell and finance gear? It's happened before, but it's rather disappointing when blue-chip firms allow their names to be used on projects without committing enough to ensure they happen.

Posted by Glenn Fleishman at 11:31 AM | Permanent Link | Categories: Metro-Scale Networks, Municipal | 1 Comment

Haw haw: Verizon Wireless agrees that advertising what I have called its "unmetered" cell data plans as "unlimited" is not the right term, and will change it due to action by the New York State Attorney General's office. The company will refund $1m to customers and pay $150,000 in penalties and costs to the state. They didn't admit any wrongdoing. The investigation found that 13,000 people nationally had their accounts canceled between 2004 and April 2007 for excessive use. In April, Verizon agreed to stop canceling accounts, and allow "common Internet uses."

I've been writing about this issue for years and years. Read this BoingBoing post in which I chimed in back in Nov. 2005, for one instance. A service advertised as unlimited, but which is actually limited, is not unlimited. NY AG Andrew Cuomo said in the press release, "When consumers are promised an ‘unlimited’ service, they do not expect the promise to be broken by hidden limitations."

Their revised terms of service spell out in more detail what a reasonable limit is, including actual numbers, and provide examples of what they allow and don't allow. The word unlimited has disappeared.

But they do the math wrong, as I've noted before. They note, "A person engaged in prohibited uses continuously for one hour could typically use 100 to 200 MB, or, if engaged in prohibited uses for 10 hours a day, 7 days a week, could use more than 5 GB in a month." No. That's one hour a day, seven days a week, not 10 hours a day, to reach 5 GB; 10 hours a day would hit 50 GB. Technically, "more than 5 GB" is accurate, but it's about as accurate as "unlimited" was in the past.
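Here's that arithmetic spelled out, using Verizon's own 100 to 200 MB per hour. The 30-day month and decimal gigabytes (1 GB = 1,000 MB) are assumptions; binary units shift the results by a few percent without changing the conclusion.

```python
DAYS_PER_MONTH = 30  # assumption
MB_PER_GB = 1000     # assumption: decimal units


def monthly_gb(mb_per_hour: float, hours_per_day: float) -> float:
    """Data used in a month at a steady hourly rate."""
    return mb_per_hour * hours_per_day * DAYS_PER_MONTH / MB_PER_GB


for hours in (1, 10):
    low, high = monthly_gb(100, hours), monthly_gb(200, hours)
    print(f"{hours:>2} h/day: {low:.0f} to {high:.0f} GB per month")
#  1 h/day: 3 to 6 GB per month
# 10 h/day: 30 to 60 GB per month
```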

Update, 05-Nov-07: Verizon updated its TOS again to note that if you exceed 5 GB per month, they could throttle you to 200 Kbps. They could still cancel your account but at least they're spelling out penalties, and providing the potential of continuous service even if they have a bone to pick with you.
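The revised policy boils down to a cap-then-throttle rule, sketched below with a hypothetical unthrottled rate and decimal gigabytes assumed. Even at the 200 Kbps penalty speed, a connection running flat out all day could still move about 2 GB, which is what makes continuous service plausible.

```python
CAP_BYTES = 5 * 1000**3  # 5 GB monthly threshold (decimal GB assumed)
THROTTLED_BPS = 200_000  # 200 Kbps penalty rate
NORMAL_BPS = 700_000     # hypothetical unthrottled rate


def allowed_bps(bytes_used_this_month: int) -> int:
    """Rate a subscriber gets under a cap-then-throttle rule."""
    return THROTTLED_BPS if bytes_used_this_month > CAP_BYTES else NORMAL_BPS


bytes_per_day_throttled = THROTTLED_BPS // 8 * 86_400  # bits/s -> bytes/day
print(allowed_bps(4 * 1000**3))                 # 700000
print(allowed_bps(6 * 1000**3))                 # 200000
print(bytes_per_day_throttled / 1000**3, "GB")  # 2.16 GB
```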

Getting closer to actually serving the customer. They better watch out: they might actually do something in our best interests.

Posted by Glenn Fleishman at 10:54 PM | Permanent Link | Categories: Cellular, Cluelessness, Legal

The Linksys WRT600N sets out to offer what no router to date can (updates added 31 Oct 2007): certified Draft N networking simultaneously in the 2.4 GHz and 5 GHz bands: This is a neat feat, but requires two radios to carry out, and carries with it a hefty $280 price tag. In testing, I found a number of design choices and missing features, along with a lapse in the standards certification process, that make me suggest that users wait for firmware updates before purchasing an otherwise quite capable router.

The WRT600N's closest competitor is the Buffalo Wireless-N Nfiniti Dual Band with gigabit Ethernet released in March for $250; it's not yet Draft N certified by the Wi-Fi Alliance, hence the Linksys unit's current uniqueness. (Read SmallNetBuilder's review.) Update, 31 Oct 2007: Buffalo is currently enjoined from importing its 802.11a, g, and n gear into the U.S.

The idea behind offering both bands in a single router is simplicity: You can throw away old gear while preserving backward compatibility, without sacrificing range or throughput. Devices that can use the 5 GHz band, and that you want running at full throttle, can do so, which is particularly important for video streaming. This also lets you reduce clutter, and migrate older equipment to newer adapters as (or if) they become available instead of all at once.

The Ultra RangePlus Dual-Band Wireless-N Gigabit Router (WRT600N), to use its unwieldy full name, uses Linksys's EasyLink Advisor, a piece of wizard software the company has been rolling out gradually across all its models. (It can be downloaded and used with certain older devices, too.) While the EasyLink Advisor does streamline setting up a new router, troubleshooting problems, and handing out a wireless security key, it was also maddeningly slow in "discovering" the router, even though the modern, fast Dell laptop running Vista that I ran it on was connected directly to the router via Ethernet. I also found it unable to configure an internal Intel Wi-Fi adapter to connect to the network, despite its assurance that it could do so.

The router also offers the usual ancient Linksys interface behind the scenes via a Web browser for advanced configuration. I found for my basic purposes, the advisor didn't offer enough, and I expect that any user who finds the need for a dual-band, simultaneous 2.4/5 GHz router will also find the advisor inadequate because of specific settings they'll want to make "by hand."

The WRT600N has four 10/100/1000 Mbps Ethernet ports. The case is a sleek black, with LEDs on the front that display the status of ports, the Internet connection, and Wi-Fi security (enabled or not). It has a single USB port to accept a hard drive or flash drive.

The WRT600N succeeded in its primary goal in life: Providing access to two separate networks while shunting data over gigabit Ethernet to directly connected devices. The 2.4 and 5 GHz networks can be set with unique names and have separate security options.

In testing for throughput, I found that the WRT600N was highly inconsistent, but that's clearly due to my particular RF environment. Although my office is a mixed retail/office/residential building, and the neighborhood isn't that dense, we have some problem on channel 1 in 2.4 GHz that renders networks in that range completely unusable, regardless of vendor. A spectrum analyzer hasn't disclosed the cause.

In 5 GHz, I see some strangeness at times, too. As a result, I can't benchmark devices with confidence when I see low or erratic numbers that don't hold up to repeated tests. Conversely, when I see consistent high performance in such a difficult environment, I can rely on the robustness of the device.

In general, I was able to see speeds across 2.4 GHz and 5 GHz that should be expected from Draft N devices, but I'm declining to note them due to my test environment. Tim Higgins of SmallNetBuilder will have extensive, controlled test results in a few days, which I'll link to. Update: These benchmarks are now available in Higgins's extensive review. I see that the numbers I saw aren't that far off from his more controlled tests.

Now on to some specific concerns.

Security. If you use the EasyLink Advisor, as Linksys expects many of its users will, you cannot choose your security key; the advisor creates a strong alphabetic-only key for you. That's great, but if you don't want to use the methods available for distributing that key to other computers, you have to type in a rather long set of characters.
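For a sense of why a letters-only key can be strong yet tedious to type, here's a sketch of generating one with Python's `secrets` module. The key length and the use of both upper- and lowercase letters are assumptions, since Linksys doesn't document the advisor's generator; the 8-to-63-character range is the WPA2 passphrase rule.

```python
import math
import secrets
import string


def letters_only_key(length: int, alphabet: str = string.ascii_letters) -> str:
    """Random passphrase drawn only from letters (mixed case by default)."""
    if not 8 <= length <= 63:
        raise ValueError("WPA2 passphrases must be 8 to 63 characters long")
    return "".join(secrets.choice(alphabet) for _ in range(length))


def entropy_bits(length: int, alphabet_size: int) -> float:
    """Strength of a uniformly random key, in bits."""
    return length * math.log2(alphabet_size)


key = letters_only_key(24)
print(key)                                 # different every run
print(f"{entropy_bits(24, 52):.0f} bits")  # 137 bits
```

Each letter carries under 6 bits, so a strong key needs a longish string, and that's exactly the string you end up typing by hand.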

The advisor can create an installation program with the key embedded that can be copied by the advisor onto a USB drive or transferred over a local network by connecting an Ethernet cable to a LAN Ethernet port. I tested the USB drive method, which exported fine but failed to install on a Windows XP SP2 system without enough explanation to troubleshoot the problem. (That system had the Linksys WPC600N dual-band card installed--the $100 one-band-at-a-time counterpart to the router--and all drivers were up to date.)

I would have liked to use Wi-Fi Protected Setup (WPS), as I now have a few computers that should be able to work with it. Unfortunately, in what appears to be a late decision in the release cycle, the WRT600N lacks WPS. There's a button on the top of the router that has a plastic laminated sticker around it labeled Reserved. I confirmed with Linksys that this release lacks WPS. It's a shame.

I wound up using the advanced configuration to set my own WPA2 passphrase--which can be set uniquely for each radio--to make it easier to add computers. That worked perfectly.

DHCP assistance. I like the DHCP Reservation system, which may not be unique to this model, but it's new to me. DHCP reservation lets you assign a persistent IP address to a given device, keyed either to its MAC address or to the machine name it broadcasts as part of its DHCP request. (On Macs, there's an option called DHCP ID, which is set separately, and can't be used for Windows machines.)
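In outline, a reservation table is a lookup the DHCP server consults before handing out an address from its pool: match the client's MAC address first, then the host name it sends in its request (DHCP option 12). The addresses, MAC, and host name below are hypothetical, and a given router's matching order may differ.

```python
import ipaddress
from typing import Dict, List, Optional, Set

IP = ipaddress.IPv4Address

# Hypothetical reservations keyed by MAC address or by client host name.
RESERVATIONS: Dict[str, IP] = {
    "00:1a:2b:3c:4d:5e": IP("192.168.1.50"),
    "office-printer": IP("192.168.1.60"),
}


def pick_address(mac: str, hostname: Optional[str],
                 pool: List[IP], leased: Set[IP]) -> Optional[IP]:
    """Return a reserved address if one matches, else the first free pool address."""
    for key in (mac.lower(), hostname):
        if key is not None and key in RESERVATIONS:
            return RESERVATIONS[key]
    reserved = set(RESERVATIONS.values())
    for addr in pool:
        if addr not in leased and addr not in reserved:
            return addr
    return None  # pool exhausted


pool = [IP("192.168.1.100") + i for i in range(3)]
print(pick_address("00:1A:2B:3C:4D:5E", None, pool, set()))              # 192.168.1.50
print(pick_address("aa:bb:cc:dd:ee:ff", "office-printer", pool, set()))  # 192.168.1.60
print(pick_address("aa:bb:cc:dd:ee:ff", None, pool, {pool[0]}))          # 192.168.1.101
```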

To see the list of clients that have received addresses over DHCP, use the DHCP Reservation button in the main Setup screen. It shows each client and how it's connected, via the wired LAN or wirelessly; for wireless clients, it also shows which band they're using.

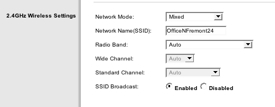

Picking SSIDs. Linksys made a strange decision in having their EasyLink Advisor set the same network name or SSID for both the 2.4 and 5 GHz networks. For adapters that support both bands, there's no simple way to mark which network you'd prefer to join. I found in testing that Linksys's own WPC600N dual-band PC Card joined the 2.4 GHz network, and I didn't see a way to change that behavior. I don't know of any tools in Windows or Mac OS X that let you set a preferred band for a network name that spans both bands, whether it comes from a single router like this one or from multiple access points.

The advisor also won't let you choose an SSID that contains spaces, which is baffling. Linksys told me that this is due to legacy support issues, as the advisor can configure older routers that cannot accept spaces in SSIDs. It's a shame the advisor isn't smart enough to know which routers can and cannot, since spaces improve the legibility of a network name.
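What the advisor could do instead is check a proposed name against the capabilities of the specific router it's configuring. A sketch, with hypothetical model names: 802.11 caps an SSID at 32 bytes and allows spaces, so the only space check needed is a per-model one.

```python
from typing import List

# Hypothetical capability table: does this model accept spaces in its SSID?
ACCEPTS_SPACES = {"legacy-model": False, "current-model": True}


def ssid_problems(ssid: str, model: str) -> List[str]:
    """List reasons a proposed network name won't work on the given router."""
    problems = []
    if not 1 <= len(ssid.encode("utf-8")) <= 32:
        problems.append("SSID must be 1 to 32 bytes")
    # Unknown models are treated conservatively, as if they reject spaces.
    if " " in ssid and not ACCEPTS_SPACES.get(model, False):
        problems.append(f"{model} does not accept spaces")
    return problems


print(ssid_problems("Home Office 5GHz", "current-model"))  # []
print(ssid_problems("Home Office 5GHz", "legacy-model"))   # ['legacy-model does not accept spaces']
```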

Again, I went into the advanced setup and entered separate names for my two networks. That worked perfectly.

Wide channels by default in 2.4 GHz. Linksys has chosen to release a router that's configured in opposition to the Wi-Fi Alliance certification standards; it's a loophole. Linksys ships the WRT600N set to use 40 MHz channels in 2.4 GHz by default, which means that when you turn the device on, you will interfere with double the number of networks that you would otherwise. The Wi-Fi Alliance Draft N standards say that the default should be 20 MHz, with manufacturers able to decide whether users can optionally enable wide channels. However, the alliance doesn't test for this (or even have a checkbox, apparently); Tim Higgins wrote about this last week. Update: Higgins writes on 31 Oct 2007 in his review of the WRT600N that he cannot observe the gateway dropping into 20 MHz channels as it should.
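
The "double" figure follows from channel arithmetic. In 2.4 GHz, channel n (1 to 13) is centered at 2407 + 5n MHz, and a 40 MHz channel bonds a primary channel with a secondary four channel numbers away. The Python sketch below counts which of the commonly used non-overlapping channels 1, 6, and 11 a transmission occupies. It treats each 20 MHz channel as exactly 20 MHz wide, which is a simplification, since real spectral masks spill further.

```python
def channel_center_mhz(channel: int) -> float:
    """Center frequency of a 2.4 GHz channel (1 to 13; channel 14 is a special case)."""
    return 2407 + 5 * channel

def span(center: float, width: float) -> tuple:
    return (center - width / 2, center + width / 2)

def overlaps(a: tuple, b: tuple) -> bool:
    return a[0] < b[1] and b[0] < a[1]

def occupied_span(primary: int, wide: bool = False, secondary_above: bool = True) -> tuple:
    if not wide:
        return span(channel_center_mhz(primary), 20)
    # A 40 MHz channel bonds the primary with a secondary four channel numbers away
    secondary = primary + 4 if secondary_above else primary - 4
    center = (channel_center_mhz(primary) + channel_center_mhz(secondary)) / 2
    return span(center, 40)

def affected_channels(primary, wide=False, secondary_above=True, neighbors=(1, 6, 11)):
    """Which of the usual non-overlapping channels this transmission would sit on."""
    ours = occupied_span(primary, wide, secondary_above)
    return [c for c in neighbors if overlaps(ours, span(channel_center_mhz(c), 20))]

print(affected_channels(6))             # [6]
print(affected_channels(6, wide=True))  # [6, 11]
```

Wherever the secondary channel lands, a wide channel sits on two of the three usual channels instead of one.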

If the WRT600N followed the standard procedure of not transmitting over a 40 MHz channel when there's traffic present that it would step on, among other conditions, that would mitigate this problem. It would essentially moot the issue: despite being set to 40 MHz, the router would never use a wide channel when the wrong conditions were present.
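
That good-neighbor behavior amounts to a gate checked before each wide transmission. This is a hypothetical Python sketch. Its three inputs (other networks heard on the secondary channel, a nearby device asking for 20 MHz only, and a busy secondary channel) are illustrative conditions, not a transcription of the Draft 2.0 mechanisms.

```python
from dataclasses import dataclass

@dataclass
class RadioEnvironment:
    bss_on_secondary: int            # other networks heard on the secondary 20 MHz channel
    neighbor_40mhz_intolerant: bool  # a nearby device has asked for 20 MHz only
    secondary_busy: bool             # traffic detected on the secondary channel right now

def channel_width_for_next_frame(configured_width_mhz: int, env: RadioEnvironment) -> int:
    """Return 20 or 40: go wide only if configured to AND nothing would be stepped on."""
    if configured_width_mhz != 40:
        return 20
    if env.bss_on_secondary > 0 or env.neighbor_40mhz_intolerant or env.secondary_busy:
        return 20
    return 40
```

With a gate like this in place, a 40 MHz default setting does little harm in a crowded neighborhood, because the radio would rarely get to use it.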

There are three mechanisms in the Draft 2.0 version of 802.11n that are supposed to prevent Draft N devices that support wide channels in 2.4 GHz from sending data over those wide channels when they would interfere with other traffic. I can't determine whether Linksys has implemented these mechanisms. It remains to be seen whether Linksys is acting as a good neighbor or an indifferent one.

In the best case, even if the WRT600N doesn't back off to 20 MHz before transmitting, adding one is like adding two 2.4 GHz Wi-Fi networks, which happens all the time. In the worst case, the default Linksys choice degrades the shared commons far more than it needs to, making everyone's experience in your airspace somewhat worse.

Inadequate 5 GHz channel selection, but commensurate with everyone else. Linksys, like other manufacturers, supports just eight of the 23 possible 20 MHz channels in 5 GHz: the four lowest and the four highest, known as UNII-1 and UNII-3. The middle 15, UNII-2 and UNII-2 extended, aren't available. Apple made the same call.
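
The channel counts check out against the U.S. 5 GHz plan, in which channel n is centered at 5000 + 5n MHz. This short Python sketch tallies the bands using the standard UNII channel assignments. Channel 165 is left out to match the count of 23.

```python
UNII_BANDS = {
    "UNII-1": [36, 40, 44, 48],
    "UNII-2": [52, 56, 60, 64],
    "UNII-2 extended": list(range(100, 141, 4)),  # 100 through 140
    "UNII-3": [149, 153, 157, 161],
}

def center_mhz(channel: int) -> int:
    return 5000 + 5 * channel

all_channels = [c for chans in UNII_BANDS.values() for c in chans]
supported = UNII_BANDS["UNII-1"] + UNII_BANDS["UNII-3"]
needs_radar_detection = UNII_BANDS["UNII-2"] + UNII_BANDS["UNII-2 extended"]

print(len(all_channels), len(supported), len(needs_radar_detection))  # 23 8 15
# Pairing adjacent supported channels yields the four 40 MHz channels
print(list(zip(supported[::2], supported[1::2])))
```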

A Linksys spokesperson explained that because the middle 15 channels require additional FCC certification, a backlog in that testing has prevented the inclusion of these channels. Devices that use UNII-2 and UNII-2 extended frequencies must detect and avoid radar that's in use on some of those bands around the U.S., as part of the compromise that opened more spectrum up in 5 GHz. Apple hinted to me in August that something was afoot, too. Some rule changes for the legacy UNII-2 channels went into effect in July, and they seem to have slowed certification down.
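
Radar avoidance on UNII-2 and UNII-2 extended channels is called dynamic frequency selection (DFS). Under FCC rules, a device must listen for radar on a channel for 60 seconds before using it, and after detecting radar it must stay off that channel for 30 minutes. This simplified Python sketch shows that bookkeeping. The class and method names are hypothetical, and a real implementation must also vacate a channel within a fixed move time.

```python
CAC_SECONDS = 60                  # listen for radar this long before first use
NON_OCCUPANCY_SECONDS = 30 * 60   # stay off a channel this long after radar is seen

# U.S. channels that require radar detection: UNII-2 and UNII-2 extended
DFS_CHANNELS = {52, 56, 60, 64} | set(range(100, 141, 4))

class DfsTracker:
    def __init__(self):
        self.cleared = set()      # DFS channels that passed a radar check
        self.blocked_until = {}   # channel -> time (seconds) it may be checked again

    def record_check(self, channel: int, listened_seconds: float,
                     radar_seen: bool, now: float) -> None:
        """Record the outcome of listening for radar on a channel."""
        if radar_seen:
            self.radar_detected(channel, now)
        elif listened_seconds >= CAC_SECONDS and now >= self.blocked_until.get(channel, 0.0):
            self.cleared.add(channel)

    def radar_detected(self, channel: int, now: float) -> None:
        """Drop the channel and start its non-occupancy period."""
        self.cleared.discard(channel)
        self.blocked_until[channel] = now + NON_OCCUPANCY_SECONDS

    def usable(self, channel: int, now: float) -> bool:
        if channel not in DFS_CHANNELS:
            return True   # UNII-1 and UNII-3 channels need no radar detection
        if now < self.blocked_until.get(channel, 0.0):
            return False
        return channel in self.cleared
```

The extra testing makes sense in this light: every UNII-2 device has to get this state machine right, and the UNII-1 and UNII-3 channels avoid it entirely.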

By default, the Linksys router automatically selects the optimum 5 GHz channel from the four 40 MHz or eight 20 MHz channels it supports. But if you change the router's configuration from Auto to choose a specific channel, you are limited to just the lowest four (UNII-1). This is a bit baffling.
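
The inconsistency is easy to see when the two paths are written out. This hypothetical Python sketch mirrors the behavior described: auto mode chooses the least crowded of the eight supported channels, while manual mode offers only UNII-1. The neighbor-count metric is an assumption, since Linksys doesn't say how it picks the "optimum" channel.

```python
AUTO_CHANNELS = [36, 40, 44, 48, 149, 153, 157, 161]  # UNII-1 + UNII-3
MANUAL_CHANNELS = [36, 40, 44, 48]                     # UNII-1 only, as observed

def auto_select(neighbor_counts: dict) -> int:
    """Pick the supported channel with the fewest neighboring networks (ties: lowest channel)."""
    return min(AUTO_CHANNELS, key=lambda ch: (neighbor_counts.get(ch, 0), ch))

def manual_choices() -> list:
    return list(MANUAL_CHANNELS)
```

Auto mode can land on a UNII-3 channel that a user could never pick by hand.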

Conclusion. A number of choices have made this router harder to set up than it should be for a user who wants both bands. The lack of WPS makes it more difficult for average users to secure. And Linksys's specific choice to release a loopholed router under Draft N certification rules is simply baffling.

With firmware upgrades, I expect this router will shine. But I'll have to wait to see such upgrades before I could warmly recommend it.

Posted by Glenn Fleishman at 4:13 PM | Permanent Link | Categories: Hardware | 2 Comments

Ruckus's CEO interviewed in Forbes: Ruckus's Selina Lo is what we call a lively interview subject. This means, "she swears." Not excessively, but with great vigor when called for. She's a hoot to interview because Lo speaks her mind--and has backed up statements with products and actions that confirm what she's said time and again. This Forbes interview captures her company's positioning quite well.

Posted by Glenn Fleishman at 11:17 AM | Permanent Link | Categories: Wee-Fi

So Cisco and Intel aren't pulling dollars out of their pockets, huh? This is fascinating. I assumed that the blue-chip reputations and billion-dollar spare-change purses of Cisco and IBM might prevent Sacramento's Wi-Fi network from having the startup problems faced by other service providers. Uh huh. The Sacramento Metro Connect consortium isn't self-funding, which surprises me. They're "closing in on the nearly $1 million" they need to get going, which has delayed the network's start.

This article misidentifies consortium members, as far as I understand it. The Metro Connect partnership for Wireless Silicon Valley, which has had its last rites read even if it's not quite dead yet, includes IBM instead of Intel. This group in Sacramento is the reverse: no IBM. Or at least as far as I've known since the bid was accepted. The other two members are Azulstar and Seakay. Update: Reader George confirmed with the Sacramento Bee's writer that IBM was part of this group. I checked back to June, when the deal was announced, and all the sources then left IBM out; see this Wi-Fi Planet article and this Unstrung reproduction of a press release, for instance. IBM must have been added later, or omitted during initial phases?

Azulstar just faced a major setback by having the plug pulled--or lack of plug?--in Rio Rancho, N.M., where after years of work they couldn't get a network running that met the city's requirements.

This is the second attempt for a Sacramento network after a previous round in which the winning bidder withdrew during negotiations when the city asked for too much free service.

Posted by Glenn Fleishman at 7:54 AM | Permanent Link | Categories: Metro-Scale Networks, Municipal

The folks at Burbank Water and Power are planning a Wi-Fi network that won't resemble any city-wide network built to date: Fred Fletcher, the assistant general manager for the utility, says that their primary concerns are conserving power in order to achieve long-term goals of shifting electrical generation to sources that produce fewer greenhouse gases. They believe Wi-Fi is the ideal mechanism to achieve that goal. They're talking publicly about their plan for the first time today at the MuniWireless conference, a summit aptly titled "Industry at a Crossroads." (Here's their press release.)

The utility, working with smart utility meter firm SmartSynch, isn't looking just into automated meter reading, in which an employee would drive around and use Wi-Fi instead of eyeballs to pull up the current readings. Nor is the goal simply to eliminate the driving with a city-wide network that could read the meters constantly.

Rather, that's just part of a set of larger plans to allow management of load through participation of their customers, as well as potentially give those customers free Wi-Fi access as an incentive for meeting conservation goals. Customers will save money, too, by shedding load at critical times.

The Wi-Fi network will be planned as a metering and residential service. Fletcher said the utility will install as many Wi-Fi nodes as needed to provide a good signal to the meters, and, by extension, to users within those locations. This means that outdoor coverage could be irregular, but it's not a focus. It also means that indoor service is much more likely to be reliably available than on other networks, which have to provide a generalized cloud and ensure both indoor and outdoor signals.

Henry Jones, the chief technical officer of SmartSynch, said that the goal was demand response, defined as "getting the demand on its own, through incentives, or, in some cases, through direct load control that's initiated by the utility to change when that demand takes place." This includes smart thermostats installed in homes and businesses that would integrate with the meters. A spike in demand could allow the utility to change the air-conditioning temperature based on a customer's own preference: if you could stand your home or office hotter, the utility can take advantage of your flexibility. Customers would not only save the cost of the electricity they didn't use, but could potentially get a rebate based on the money the utility didn't spend during the demand period. (Power bought on the spot market can be crazily expensive compared to power routinely produced or under regular contract.)
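
The thermostat side of this can be reduced to two small calculations. The first is how far the utility may raise a setpoint, bounded by the customer's stated tolerance. The second is what the customer gains from the avoided energy. This is a hypothetical Python sketch. The function names, the 50 percent rebate share, and the example prices are assumptions for illustration, not SmartSynch's or Burbank's terms.

```python
def event_setpoint(normal_f: float, customer_max_f: float, requested_raise_f: float) -> float:
    """Cooling setpoint during a demand event, capped at the customer's stated limit."""
    ceiling = max(normal_f, customer_max_f)   # never force a setpoint the customer refused
    return min(normal_f + requested_raise_f, ceiling)

def customer_benefit(kwh_avoided: float, retail_price: float, spot_price: float,
                     rebate_share: float = 0.5) -> tuple:
    """(bill savings, rebate); the rebate is a share of what the utility avoided paying on the spot market."""
    bill_savings = kwh_avoided * retail_price
    rebate = kwh_avoided * spot_price * rebate_share
    return bill_savings, rebate

print(event_setpoint(76, 80, 3))   # 79
```

A customer who will tolerate no change at all (a limit at or below the normal setpoint) keeps the normal setpoint, which matches the "based on a customer's own preference" framing.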

Smart thermostats are in some use and have been talked about for years, but linking them into a live network has been the problem. I asked Fletcher why the utility didn't opt for broadband over powerline (BPL), which always seems to be next year's breakout technology. The reason was simple: BPL follows the same path as power, and a power outage would prevent them from using the network to control substation switching. Wi-Fi, which could be powered through back-up batteries, could continue to function and reach otherwise cut-off shunts and telemetry.

The metering Burbank will put in place will let them first assess how power is being used, and then use that to create a plan for how to best even out demand. This could include huge incentives for some customers to replace ancient air conditioners, as some utilities do now to promote efficiency, as well as offering free Wi-Fi as a carrot.

The remote metering will allow people to log in at any time to check their usage, and will enable pay-as-you-go billing, something that can help lower-income people who are typically faced with post-facto bills, and who then get behind and have their power disconnected, which adds additional charges. At minimum, a low-speed Wi-Fi network connection will let customers without other Internet access connect to pay their bill or view usage, too. (The meters will allow remote disconnects, too, rather than requiring a technician to visit the home, reducing cost.)
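
Pay-as-you-go billing on top of remote meter reads amounts to debiting a prepaid balance with each reading and warning before the balance runs out. This is a hypothetical Python sketch. The flat per-kWh rate and the low-balance threshold are assumptions, because the post doesn't say how Burbank would price it.

```python
from dataclasses import dataclass

@dataclass
class PrepaidAccount:
    balance: float                     # dollars remaining
    rate_per_kwh: float                # assumed flat rate
    low_balance_warning: float = 10.0  # warn the customer at or below this amount

    def apply_reading(self, kwh_since_last: float) -> str:
        """Debit usage from one remote meter read and report the account's status."""
        self.balance -= kwh_since_last * self.rate_per_kwh
        if self.balance <= 0:
            return "depleted"
        if self.balance <= self.low_balance_warning:
            return "low"
        return "ok"
```

Because each read arrives over the network, the customer hears about a low balance days before a bill would have arrived.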

The utility gets to leverage its existing field workers, mapping data (which includes trees in the rights of way and building outlines), fiber-optic network, and utility pole and other rights of way ownership. Their meter readers will fan out with GPS devices and gather the location of every meter in the city over a few months, and that data will be directly integrated into their existing GIS system.

They also have the advantage of having giant movie and television studios in their town that use a lot of juice: The top few companies use 25 percent of the electricity; the top 200, 50 percent of the town's juice.

The rollout is planned in four phases, which increase in cost. Phase 1 was a $50,000 technology assessment that's finished. Phase 2 involves spending $50,000 on a pilot test on the utility's 20-acre campus. In phase 3, they'll spend $1m and capture a good portion of the power usage. Because big customers are involved, the utility estimates that 80 to 100 Wi-Fi nodes will cover just 80 to 100 meters, roughly one node per meter. Finally, phase 4 will cost about $5m and cover most of the 18 sq mi town.
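
Tallying the four phases gives a rough sense of scale. This small Python sketch uses only the figures quoted. It divides phase 4 by the full 18 square miles even though that phase covers "most" of the town, so the per-square-mile figure is, if anything, low.

```python
phases = {
    "1: technology assessment": 50_000,
    "2: pilot on 20-acre campus": 50_000,
    "3: big customers (80 to 100 nodes)": 1_000_000,
    "4: most of the town": 5_000_000,
}
total = sum(phases.values())
print(f"total: ${total:,}")                                           # total: $6,100,000
print(f"phase 4 per sq mi: ${phases['4: most of the town'] / 18:,.0f}")  # phase 4 per sq mi: $277,778
```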

Burbank, as I noted above, has a fiber-optic network already spread throughout the town. They use OC3 SONET now, an older 155.5 Mbps standard, and will retain that while overlaying 10 gigabit per second Ethernet with equipment from Cisco. This will allow them to feed all their Wi-Fi nodes or mesh clusters rather easily, but also gives them an additional line of revenue for their Internet service operations: studios will likely buy access for transport. Burbank will also invest in a high-definition transport intertie with firms that specialize in moving television data around over long distances.

This idea in Burbank is rather powerful because it's not just another municipal Wi-Fi network. Rather, there are specific applications already justified for long-term cost savings that will be put in place. Wi-Fi access will be an extra, and while a component, not the critical one. Not every utility could make this work: Having fiber in place and a well-understood future goal and squeeze on power generation make a perfect storm in which Wi-Fi fits in this Southern California town.

Posted by Glenn Fleishman at 5:00 AM | Permanent Link | Categories: Municipal, Power Line

DS2 announces 400 Mbps powerline networking offering: It can carry 3 HDTV and 2 SDTV streams at the same time. It's depressing that any household would need five simultaneous TV streams to be happy. Powerline networking for the home has multiple proprietary standards and one industry-supported effort called HomePlug. There's been no coalescing or consolidation, unfortunately. A fair amount of powerline and similar home networking technology is used for distributing IPTV rather than solely for home networking; that means a service provider tends to provide the equipment, and interoperability isn't an issue.
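
For scale, five simultaneous streams need far less than 400 Mbps. This is a back-of-the-envelope Python sketch. The per-stream bitrates (about 19.4 Mbps for broadcast-style MPEG-2 HDTV and 4 Mbps for SDTV) are assumptions. A figure like 400 Mbps is also a raw signaling rate, and usable throughput is considerably lower.

```python
STREAM_MBPS = {"HDTV": 19.4, "SDTV": 4.0}   # assumed MPEG-2 broadcast-style bitrates

def required_mbps(hd: int, sd: int) -> float:
    """Aggregate bitrate for a mix of simultaneous HD and SD streams."""
    return hd * STREAM_MBPS["HDTV"] + sd * STREAM_MBPS["SDTV"]

need = required_mbps(3, 2)
print(f"{need:.1f} Mbps")   # 66.2 Mbps
```

The headroom is what a powerline vendor needs to cope with noisy household wiring, where real throughput can fall well short of the headline rate.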

Posted by Glenn Fleishman at 8:56 AM | Permanent Link | Categories: Power Line

The BBC reports that British Telecom uses WEP on its home networks: I'm not sure whether BT enables WEP by default on their home Wi-Fi routers, or if you can't upgrade to use WPA or WPA2 at all. However, this makes the WEP crack that will be discussed by AirTight researchers at ToorCon this weekend all the worse. I tend to think of WEP as so 20th century--something used only in despair. In fact, this BBC article makes clear that WEP is still in wide circulation because of compatibility issues.

I think of Windows XP as the default operating system for Windows users; there are still plenty of pre-XP home users out there, too. (WPA can be used on some pre-XP systems, depending on drivers and other factors.) The other day, a colleague received email from a graphic designer who uses Mac OS 8.6 and is still happy with it, but is considering updating. That's a nearly decade-old system release. I suppose I'm too sanguine about WEP's availability.

Posted by Glenn Fleishman at 8:14 AM | Permanent Link | Categories: Security | 2 Comments

The British spectrum regulator has set preliminary rules for in-flight mobile device use (voice and data) with picocells onboard, but sets 2008 as first deployment: Ofcom says that mobile devices may be used at an airline's discretion at altitudes of 3,000m (10,000 ft) or higher. On-board picocells would be required. The initial process will include only 2G services, which has been expected all along, so GSM voice calls and GPRS data using 1800 MHz only. 3G would come later, if 2G tests out fine, as it uses other frequency ranges. Ofcom is looking for feedback by Nov. 30.

Although Ofcom represents just the UK, it is working with other EU member states to create a regime that would be common across all of their territories. Such a decision is expected by late 2007 or early 2008, Ofcom notes in the executive summary of its request for comments.

Posted by Glenn Fleishman at 11:42 AM | Permanent Link | Categories: Air Travel, Regulation

InfoWorld has a write-up on an upcoming Toorcon presentation by Vivek Ramachandran and Md Sohail Ahmad: The AirTight Networks researchers have developed an attack they call Caffe Latte; it uses a laptop's attempts to connect to WEP-protected networks as the jimmy that lets the cracker into a position to force the laptop to issue tens of thousands of WEP-encrypted ARP requests, which are used to crack the network key. Caffe Latte lets the attacker then act as a man in the middle, providing Internet access from another network while examining the victim's computer or installing payloads. This attack can be used anywhere: while whiling away your time at a cafe, you could be cracked, hence the fancy name.
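
Each captured packet helps the attacker because of how WEP builds its encryption keys. Every frame is encrypted with RC4 using a per-packet seed made of a 24-bit initialization vector (IV), sent in the clear, followed by the shared network key. This Python sketch shows that construction, with `wep_encrypt` as a hypothetical helper. It omits WEP's CRC-32 integrity value and frame headers. It only illustrates why tens of thousands of frames under one key give an attacker so much to work with, and it is not the Caffe Latte technique itself.

```python
def rc4(key: bytes, data: bytes) -> bytes:
    """Standard RC4: key scheduling (KSA), then keystream bytes XORed with the data."""
    s = list(range(256))
    j = 0
    for i in range(256):
        j = (j + s[i] + key[i % len(key)]) % 256
        s[i], s[j] = s[j], s[i]
    out = bytearray()
    i = j = 0
    for byte in data:
        i = (i + 1) % 256
        j = (j + s[i]) % 256
        s[i], s[j] = s[j], s[i]
        out.append(byte ^ s[(s[i] + s[j]) % 256])
    return bytes(out)

def wep_encrypt(iv: bytes, network_key: bytes, payload: bytes) -> bytes:
    """WEP-style encryption: RC4 seeded with IV || key; the IV is prepended unencrypted."""
    if len(iv) != 3:
        raise ValueError("WEP IVs are 24 bits, so only 16,777,216 values exist")
    return iv + rc4(iv + network_key, payload)
```

Since the IV is visible and the rest of the seed never changes, every frame shows an attacker another RC4 seed that shares the same secret suffix, and statistical attacks on RC4 need lots of those.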

Update: Astute readers noted that this specific attack first appeared on Darknet.org.uk as Wep0ff in January 2007. I'm not sure from the InfoWorld article whether there are any differences between that tool and the Toorcon presentation. Another update: See comments; Ramachandran says their attack is different, and the full details will be revealed at the conference.

The application of this attack is interesting, because although the article and Ramachandran/Ahmad's Toorcon description talk about business use of WEP, actual WEP use by corporations is pretty limited. Most companies of any scale are using some form of 802.1X or other credential-based logins, which can't be subverted by this attack. Companies in retail and logistics are apparently the most vulnerable, because early Wi-Fi built into retail point-of-sale systems and warehouse scanners is still in wide use and can only support WEP. If a cracker can associate the cracked key with a company by scanning the victim's hard drive or using other intrusion tools, then they can go to that company and enter its network at will, too. That's what led to the break-in at TJX, the parent company of TJ Maxx and Marshalls.

The broader implication is that if you ever attached to a WEP-protected network and stored the key, your laptop is now vulnerable to this attack. This may lead people to turn their Wi-Fi radio off when they're out in public and not actively attached to a network. (It's a good idea for reducing battery drain, too, of course.) The researchers seem to be using an older form of WEP attack, as they suggest it could take up to 30 minutes to break the WEP key in this manner; other researchers revealed a method back in April that works in under two minutes.

The vulnerabilities exposed by this attack arise because the IP ranges associated with Wi-Fi networks are often considered trusted networks by firewall software. Most firewall software requires that you agree or disagree that a particular network range represented by a Wi-Fi network that you connect to is trusted or untrusted. I suspect most users add the network to their trusted category when they connect to a network, assuming it to be safe--maybe the case when it's a home network. Which means that popular private addressing ranges starting with 10.0 or 192.168 are already approved in your firewall. With the attacker managing to appear to your computer like a WEP network it's already joined, they may not be blocked from probing for the many weaknesses typically found on most Windows computers through outdated software and drivers.
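
The trust problem comes down to a firewall matching on address ranges rather than on which network is actually in use. This minimal Python sketch uses the standard `ipaddress` module. `TRUSTED_RANGES` and `is_trusted` are hypothetical stand-ins for a personal firewall's "trusted network" setting.

```python
import ipaddress

# Ranges the user approved at home; a spoofed network can hand out addresses in them too
TRUSTED_RANGES = [ipaddress.ip_network("192.168.1.0/24"),
                  ipaddress.ip_network("10.0.1.0/24")]

def is_trusted(peer: str) -> bool:
    """Range-based rule: any peer inside an approved range gets through."""
    addr = ipaddress.ip_address(peer)
    return any(addr in net for net in TRUSTED_RANGES)

# An attacker impersonating the home network can simply use the victim's home subnet
print(is_trusted("192.168.1.66"))   # True
print(is_trusted("203.0.113.9"))    # False
```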

Posted by Glenn Fleishman at 4:08 PM | Permanent Link | Categories: Security | 2 Comments

Nokia announced the N810, the latest Wi-Fi-equipped tablet PC: The tablet will ship in November in the US for $479. The Linux-based device can run third-party software, including the Wi-Fi hotspot management and hotspot network software from Devicescape and Boingo announced in the last week; until now, it was a mystery why that software wouldn't be available for tablets until November. The tablet can connect over Bluetooth to a cell phone for cellular data. The N810 includes Skype, a camera, and a GPS with maps.

Colorado Springs, Colo., buys downtown Wi-Fi network for $10: The city purchased about 40 nodes from SkyTel Corp., which had decided its trial of a Wi-Fi network didn't attract enough paying users. It was apparently cheaper to sell the equipment than to take it down. The city assumes a utility pole lease, too. This article says that Colorado cities can't resell public access, so the city will use it to test public safety and municipal applications.

Posted by Glenn Fleishman at 2:16 PM | Permanent Link | Categories: Wee-Fi

Boingo announced that their compact Boingo Mobile client is now available for Nokia phones, tablets: The software, available now for S60 phones and handhelds like the N95 and in November for the N800 tablet, connects the devices to Boingo's worldwide network of tens of thousands of hotspots for $7.95 per month. The price includes Wi-Fi voice calls and Wi-Fi data used on these hotspots. As with the Devicescape deal announced last week, this doesn't put the software on the phones, but makes it simple for the Boingo-developed software to be found and installed on supported Nokia devices. (Boingo's laptop-enabled network has 100,000 hotspots; the mobile-enabled locations and pricing model require more work and negotiation with operators as Boingo continues to build its mobile footprint.)

Christian Gunning, Boingo's head of marketing, said that while Nokia's S60 and N Series phones weren't well known in the U.S., they were "widely available and widely successful" elsewhere. Subscribers typically pay the full cost for phones in Europe, and users aren't limited as to what they install on them. That means that VoIP over Wi-Fi isn't an unusual application, and Boingo can enable that by providing the network at a predictable monthly cost. The deal also allows Nokia's multimedia phones and tablets to access streaming and downloadable media over Wi-Fi at a much lower cost than with comparable laptop-oriented Wi-Fi deals, including Boingo's own U.S. and international unlimited plans ($22 and $39, respectively).

While Boingo released part of its software suite under open source licensing so that others could port its service to platforms Boingo wouldn't have spent the time to develop for itself, the Nokia deal was done in cooperation with the Finnish handset giant. In contrast, Belkin developed its Boingo add-on for the Skype phone it released last year on its own, and contracted with Boingo to allow retail Boingo customers to gain access to the aggregated network. (Manufacturers can choose to contract for users and keep part of the fees, but that requires paying Boingo minimum monthly fees.)

I took the opportunity to talk to Gunning about the company's current strategy. Founded in 2001, they've been pursuing their aggregated network approach for nearly six years; I first wrote about them in Dec. 2001.

Gunning said that to ensure quality on their increasingly large and non-U.S. aggregated network, they contracted with a third party, Lionbridge to handle not just localization--customizing their applications and Web sites for other languages and countries--but also worldwide testing. "We contract with them literally to jump on planes and go from country to country to country with laptops and handheld devices and do quality testing on major networks around the world," Gunning said.

Boingo tried to handle this in house and on an ad hoc basis, but couldn't achieve the level of quality its platform partners required, Gunning said. As I have explained to the many people over the years who have asked me how Boingo made money selling subscriptions at retail, Boingo is the private-label back end, or hotspot component, for products sold by Fiberlink, Verizon Business, and most recently, Alltel.

Through this testing, Gunning said, Boingo was able to help its hotspot partners achieve better results, and also to remove from its directories a handful of locations where it couldn't guarantee consistent performance. "We can monitor if there's a specific SSID or a specific venue ID that's consistently failing," said Gunning.
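
Monitoring of the kind Gunning describes could be as simple as tallying login attempts per venue and flagging the outliers. This is a hypothetical Python sketch. The record format, the 20 percent failure threshold, and the minimum sample size are assumptions, not Boingo's actual criteria.

```python
from collections import defaultdict

def failing_venues(attempts, max_failure_rate=0.2, min_attempts=20):
    """attempts: iterable of (venue_id, succeeded) pairs reported by clients."""
    totals = defaultdict(int)
    failures = defaultdict(int)
    for venue_id, succeeded in attempts:
        totals[venue_id] += 1
        if not succeeded:
            failures[venue_id] += 1
    # Require enough attempts that one bad afternoon doesn't delist a venue
    return sorted(
        v for v in totals
        if totals[v] >= min_attempts and failures[v] / totals[v] > max_failure_rate
    )
```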

Because Boingo has been in a position to work with networks worldwide to improve their consistency, I asked Gunning where 802.1X--a standard network authentication method widely supported for enterprises--stood in terms of hotspots. "802.1X in hotspots would be a phenomenal boom for the end user in terms of security and safety," he said, but besides a handful of networks like KT in South Korea (which requires it), and T-Mobile (not a Boingo network partner outside its airports), and iBahn, there's not much uptake.

What Gunning did note, however, is that the Wireless Internet Service Provider (wISPr) standard developed at the Wi-Fi Alliance (and seemingly not available on their site) has proved an effective set of guidelines for hotspot operators in providing at least a basic level of compatibility. Boingo had to build dozens of authentication scripts in their early days, but now can use one of about five scripts that work with popular interpretations of the wISPr guidelines. (It's not a certified, tested standard, but a set of basic recommendations.)
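
A client that follows the WISPr recommendations looks for an XML block that the hotspot gateway embeds in its login page, usually inside an HTML comment. It reads the login URL from that block instead of scraping the page. This minimal Python sketch uses only the standard library. The element names (`WISPAccessGatewayParam`, `Redirect`, `MessageType`, `LoginURL`) and the message type 100 follow commonly documented WISPr 1.0 pages. Treat them as assumptions, since the guidelines don't seem to be available on the Wi-Fi Alliance site.

```python
import re
import xml.etree.ElementTree as ET

WISPR_BLOCK = re.compile(r"<WISPAccessGatewayParam.*?</WISPAccessGatewayParam>", re.DOTALL)

def find_login_url(html: str):
    """Return the gateway's LoginURL if the page carries a WISPr redirect, else None."""
    match = WISPR_BLOCK.search(html)
    if not match:
        return None
    root = ET.fromstring(match.group(0))
    redirect = root.find("Redirect")
    if redirect is None:
        return None
    # MessageType 100 is taken here to mean an initial redirect (assumption)
    if (redirect.findtext("MessageType") or "").strip() != "100":
        return None
    url = (redirect.findtext("LoginURL") or "").strip()
    return url or None
```

One parser like this covers every gateway that emits the block, which is how a handful of scripts can replace dozens.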

On the financial front, Boingo remains privately held and close-lipped. Gunning noted that Deloitte & Touche had recently ranked Boingo the No. 2 company in the Los Angeles area for growth over a five-year period. This gives us a glimpse into their revenue, as qualifying firms must have had over $50,000 in yearly revenue in 2002 and over $5m in annual revenue in 2006. The accounting firm privately checks a firm's books to determine revenues and other factors.

Boingo was reported as having 13,398 percent growth over that period, which means that, assuming at least $50,000 in revenue in 2002, they had nearly $7m in revenue by 2007. It's more likely that they had well over $50,000 in revenue in 2002, and thus are probably above $20m now, including Concourse Communications revenue. That would represent something like a few tens of thousands of regular subscriptions and some hundreds of thousands of one-off purchases at airports. While Gunning wouldn't confirm a precise amount, he stated that it is well above that mark. Gunning also said that "we are cash flow positive; we have a sustainable business."
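
The arithmetic behind those estimates: growth of P percent means current revenue equals the base times (1 + P/100), so 13,398 percent multiplies the base by 134.98. This short Python sketch uses the figures above. The $20m target is the estimate in this post, not a reported number.

```python
GROWTH_PCT = 13_398

def revenue_after(base: float, growth_pct: float = GROWTH_PCT) -> float:
    """Revenue at the end of the period given the starting revenue."""
    return base * (1 + growth_pct / 100)

def base_needed(target: float, growth_pct: float = GROWTH_PCT) -> float:
    """Starting revenue required to reach `target` at this growth rate."""
    return target / (1 + growth_pct / 100)

print(f"${revenue_after(50_000):,.0f}")     # $6,749,000
print(f"${base_needed(20_000_000):,.0f}")   # $148,170
```

So for the $20m estimate to hold, Boingo's 2002 revenue would have needed to be about $148,000, roughly three times the $50,000 minimum.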

I noted Concourse a moment ago: Boingo acquired the airport Wi-Fi provider and cellular network equipment operator in 2006. Concourse has agreements for service at a large percentage of the major airports in the U.S., including Detroit, Minneapolis, O'Hare, and Midway in the Midwest; Atlanta, Houston, Memphis, and Nashville in the South; and JFK, Newark, and LaGuardia in the Northeast. (On the cellular side, cellular operators contract with a central network builder, sometimes a cell operator and sometimes a third party like Concourse, which in turn pays fees to the airport authority.)

Gunning said that the acquisition was a way for Boingo to expand its retail identity while also gaining chits that would allow it to expand its networks. He noted that some unique network deals that the company assembled required reciprocal roaming: Boingo could include these networks in their aggregated footprint by also extending access to those operators' subscribers when they roamed to Concourse-managed airports. This has given Boingo some exclusivity over competing hotspot network components offered by firms like iPass.

Related to that, I asked Gunning if the evil twin problem at O'Hare and elsewhere was true or overblown. In this scenario, travelers see a network name called "Free Wi-Fi" or a variant when they check on available Wi-Fi. Some security researchers have checked and seen that these are ad hoc networks. Gunning confirmed that there is a virus or viruses in the wild that are tailored to advertise an ad hoc network, and then transmit various payloads, such as spam zombie software or other keyloggers, to any vulnerable computer that connects. It also "turns your machine into a free Wi-Fi hotspot broadcasting ad hoc machine to spread the virus," further propagating itself.
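
Ad hoc networks are easy to tell apart in scan results. Every 802.11 beacon carries a Capability Information field: access points set its ESS bit (bit 0), and ad hoc peers set its IBSS bit (bit 1). This is a minimal Python sketch. The `(ssid, capability)` input format is an assumption, since each operating system exposes scan results differently.

```python
ESS_BIT = 0x0001   # set by infrastructure access points
IBSS_BIT = 0x0002  # set by ad hoc (computer-to-computer) networks

def classify(capability: int) -> str:
    """Label a network from the Capability Information field of its beacon."""
    if capability & IBSS_BIT:
        return "ad hoc"
    if capability & ESS_BIT:
        return "infrastructure"
    return "unknown"

def suspicious_networks(scan):
    """Return SSIDs advertised as ad hoc networks, such as a 'Free Wi-Fi' lure."""
    return [ssid for ssid, capability in scan if classify(capability) == "ad hoc"]
```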

It's been interesting to follow the model that Boingo founder (and EarthLink founder) Sky Dayton promulgated back in 2001: that the goal was to fill pipes, not to build networks. Dayton saw a distinct difference between the two tasks, an unpopular view at the time, when companies were being started that tried to build retail brands around their own small number of hotspots. While T-Mobile and a few other companies still have a strong retail Wi-Fi association, frequent business travelers have tended to move towards subscriptions with whichever provider best covers the hotspots they use most, whether AT&T WiFi, T-Mobile, Boingo, iPass, or others.

Gunning echoed Dayton's words when he spoke about how the company views itself: "We're all about being a big, fat dumb pipe to do whatever you want."

Posted by Glenn Fleishman at 9:05 AM | Permanent Link | Categories: Aggregators, Gadgets, Hot Spot

The BBC has partnered with The Cloud to make its online services available free at the network's 7,500 hotspots: BBC offers a variety of programming, including TV program (programme) downloads, through its Web site. A special Windows-only player will be supplemented with Mac OS X and Linux versions later this year, and Flash streaming will be offered, too. Downloaded programs can currently be kept on a computer for up to 30 days. At The Cloud locations, streaming and downloading will be available at no cost, but will require a laptop. They'll expand to portable devices like the Nokia N95 multimedia smartphone in the future. The BBC says they have 250,000 regular users of the iPlayer software.

Posted by Glenn Fleishman at 3:25 PM | Permanent Link | Categories: Hot Spot, Media, Streaming

Ah, how quickly they forget the first time around: AT&T Wireless once installed Wi-Fi at six Amtrak stations across the Northeast Corridor, but I don't believe it worked very well. The manager at Baltimore's Penn Station quoted in this Baltimore Sun article said they'd "experimented with a Wi-Fi connection." Hrmphf. In any case, AT&T Wireless became part of Cingular and is now just AT&T (wireless), and it has shed some of those offerings along the way.

The new Amtrak station Wi-Fi is run by T-Mobile, which doesn't forget that Wi-Fi is part of its business, and can also be found at the other Penn Station in New York City, 30th Street Station in Philadelphia, Wilmington Station (Delaware), and Union Station (D.C.).

The same station manager might be talking out of turn when he answers yes to a reporter's question about Wi-Fi on the trains. Amtrak has been rumored in the past to be considering it. However, few trains in the U.S. have any form of Internet access on board, and many projects have gone awry in recent months. I expect Amtrak would prefer to make that sort of announcement directly. There's enough 3G along the Northeast Corridor that a cell-based, somewhat continuous service could likely be offered, with EVDO Rev. A backhauling it.

A typo in the article might reveal the heights to which Wi-Fi hype can climb: an expert consulted estimated "500,000 million" people use Wi-Fi worldwide. Adding the hyphen in makes that statement so much less interesting. But it's also less accurate. Tens of millions of people use Wi-Fi worldwide, not 500,000 to 1,000,000.

Posted by Glenn Fleishman at 3:22 PM | Permanent Link | Categories: Rails | 1 Comment

Novarum has released a limited set of its first-half 2007 findings from testing metro-scale Wi-Fi and cell data networks: The rankings are fine, but I'm more interested in what they discovered while performing their tests. They found that high-powered Wi-Fi adapters really do make an appreciable difference in coverage and performance, something that's not always been clear. But they were surprised to find that regular-power 802.11n adapters deliver about two-thirds of the high-powered radios' advantage in reception and throughput, at much lower cost.

Novarum's other key findings fall into two areas. First, even more than the 40 Wi-Fi nodes per square mile they previously recommended are needed to approach 100-percent "service availability." They define this term as the ability to access the network and perform tasks, as opposed to the mere presence of a signal. Second, 3G cell data networks continue to improve in performance (their measure of bandwidth) even as they excel in service availability. That availability comes from client hardware's ability to drop down to 2G service where 3G is unavailable, which keeps a seamless connection.
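Novarum distinguishes between a visible signal and usable service. That difference can be expressed as two separate ratios over the same set of test locations. The following is a minimal sketch, assuming each location's result is recorded as two booleans. The `LocationTest` record and what counts as "tasks completed" are hypothetical, not Novarum's actual data format.

```python
from dataclasses import dataclass


@dataclass
class LocationTest:
    location: str
    signal_seen: bool      # an access point was visible at this spot
    tasks_completed: bool  # the client connected, logged in, and finished the test tasks


def signal_presence(tests: list[LocationTest]) -> float:
    """Share of test locations where any signal was detected."""
    return sum(t.signal_seen for t in tests) / len(tests)


def service_availability(tests: list[LocationTest]) -> float:
    """Share of test locations where the network was actually usable."""
    return sum(t.tasks_completed for t in tests) / len(tests)


tests = [
    LocationTest("A", True, True),
    LocationTest("B", True, True),
    LocationTest("C", True, False),  # signal present, but the session failed
    LocationTest("D", True, True),
]
print(signal_presence(tests), service_availability(tests))  # 1.0 0.75
```

A network can therefore look fully "covered" on a signal map while still failing a quarter of the time by Novarum's stricter definition.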

Phil Belanger and Ken Biba, Novarum's founders, date back to the early days of Wi-Fi and wireless networking. They've been through the wringer at several firms. They started testing Wi-Fi networks in 2006, planning two lines of business. The first was selling reports to cities and companies on a spec basis, conducting the studies and then finding buyers for the data. The second was being hired to perform independent audits or competitive analysis. Beginning today, they are making reports available for à la carte purchase, while continuing to sell subscriptions for their full feed and custom work.

Novarum's methodology involves taking a standard suite of clients that they can test from a set of 20 locations around a city, performing the same measures each time they re-test a city. They drive around, stop at specific locations chosen for particular purposes, and use a standard laptop 802.11g card, a newer but not fancy 802.11n adapter, a 300 mW laptop card with an external 5 dBi antenna mounted on the car from which they test, a Ruckus router, and various cell data modems, among other devices. They test Wi-Fi and cell data to provide apples-to-apples comparisons. Novarum uses four measures, weighting service availability and performance (itself a throughput number weighted towards downstream speeds) more heavily than ease of use and value. (They tested whether using more locations provided more accurate results, and decided it did not change the averages or standard deviation enough to matter.)
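The four measures combine naturally as a weighted average, with performance itself computed as a downstream-weighted throughput figure. The following is a minimal sketch of that kind of scoring. The weights, the 1 Mbps reference speed, and the 0 to 100 scale are hypothetical. The text says only that availability and performance count for more than ease of use and value.

```python
# Hypothetical weights: the text says only that availability and performance
# count for more than ease of use and value, not what the weights are.
WEIGHTS = {"availability": 0.35, "performance": 0.35, "ease_of_use": 0.15, "value": 0.15}


def performance_score(down_kbps: float, up_kbps: float,
                      ref_kbps: float = 1000.0, down_weight: float = 0.75) -> float:
    """0-100 throughput score, capped at a reference speed and weighted toward downstream."""
    down = min(down_kbps / ref_kbps, 1.0) * 100
    up = min(up_kbps / ref_kbps, 1.0) * 100
    return down_weight * down + (1 - down_weight) * up


def overall_score(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted average of per-measure scores that share a 0-100 scale."""
    total = sum(weights.values())
    return sum(weights[m] * scores[m] for m in weights) / total


perf = performance_score(down_kbps=1000, up_kbps=500)  # 87.5
print(overall_score({"availability": 80, "performance": perf,
                     "ease_of_use": 60, "value": 90}))
```

Dividing by the sum of the weights keeps the result on the same scale even if the weights are later changed so that they no longer add up to exactly one.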

In an interview, Belanger said that their testing this year--some of which involved revisiting networks--showed that cellular carriers and metro-scale Wi-Fi networks were both improving, in many cases rather dramatically. (You can view a summary of their findings on their Web site.)

Belanger said that, notably, Philadelphia's EarthLink Feather network had improved dramatically since their last tests, when it was just out of its pilot stage. "EarthLink is doing what the new CEO said; they're going to stay in the current footprint and make that work," Belanger said. Novarum measured a 50-percent increase in node density over last year's tests, and saw "much better service availability" in the covered area. Speeds of nearly 1 Mbps downstream aren't unusual. Novarum dubbed the Philadelphia network "most improved."

Mountain View, Calif.'s network, run by Google on a free basis, has also improved tremendously since their last visit in 2006. Belanger said that Google and its hardware provider Tropos were treating the town like a lab, constantly tweaking the network. A strange bit of interference in the 2.4 GHz band in Mountain View has required both companies to adapt. Node density is now at about 43 per square mile, but a combination of other tweaks and firmware upgrades boosted throughput: "The dramatic improvement was in performance," Belanger noted.

I asked about Portland, Ore., currently the only major city in MetroFi's deployment plans. The mayor's office recently revealed, and the firm confirmed, that without a change in the city's commitment or an infusion of venture capital, the network will grow only slightly beyond its current mostly-downtown footprint. Belanger said their testing found poor availability and lots of inconsistency in Portland's Wi-Fi service.

In their first test, they found service availability in Portland with a regular Wi-Fi client in just 30 percent of the locations they tested. (MetroFi publishes a full-disclosure coverage map, so there shouldn't be any ambiguity about live areas of the network.) "We were so disappointed with the results, that we've gone back and tested it again" in the third quarter of 2007, wondering if they had just hit a bad day on the network. But the results were consistent.

With a node density of roughly 30 per square mile in the areas tested in Portland, Novarum found service "very poor" with a regular client. They also found that the session-based login would time out even while they were actively using the network, requiring a new login. (Belanger noted that Novarum had bid as a service auditor for the city of Portland's evaluation of the network, a contract another firm won.)

Their single biggest conclusion from testing dozens of networks is that service availability remains metro-scale Wi-Fi's biggest weakness compared with cellular data networks. In the cities they've tested to date, cellular service availability reached 100 percent in 15 municipalities. Among Wi-Fi services, only the St. Cloud, Fla., network reached that 100-percent mark, and it did so only with a high-powered adapter. On average, cell networks had 87-percent service availability; metro-scale Wi-Fi, 71 percent.

Sprint Nextel's and Verizon's EVDO Rev. A upgrades also showed an appreciable improvement in speed in areas they had tested before, running 20 to 30 percent faster. Metro-scale Wi-Fi tends to run below or well below 1 Mbps downstream, so the upgrade makes the speed difference between it and 3G cell data networks even less pronounced. The biggest differentiators remain the gap in price (Wi-Fi ranging from free to $20 per month; cell data, $60 to $80 per month) and Wi-Fi's lower service availability in supposedly covered areas. This should make coverage a more important part of the service matrix as providers decide where to spend money.

In their last report, several months ago, Novarum said "40 was the new 20," meaning that 40 nodes per square mile appeared to be the density at which metro-scale Wi-Fi networks operated well and had wide availability, rather than the 20 nodes per square mile that equipment makers like Tropos once said would work. (Tropos no longer gives that number out.)

Belanger said that through continued testing, they're finding that slightly north of 40 is even better at achieving service availability. If a network has good uptake, additional nodes would also have the added advantage of keeping throughput high. Belanger commented on the service availability in most cities they tested (remember that 71 percent was the average): "It's just we're not there at that threshold where it appears to be a solid service." He noted, "At about 85% is about the area where it's going to appear like a robust service, you'd actually pay for it."

Even at over 40 nodes per square mile, Belanger said a Wi-Fi network should cost $100,000 to $150,000 per square mile. He considers that much cheaper than equivalent technologies deployed in cities, especially if spectrum licenses are factored in for alternatives like mobile WiMax. (Costs for both licenses and infrastructure may vary outside cities. One reader said that WiMax-like technology has very competitive costs in more rural environments, where licenses are relatively cheap.)
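The arithmetic below makes the per-square-mile figure concrete. It converts the figure into a total for a coverage area and into the per-node budget it implies. The 10-square-mile area is hypothetical, and dividing by exactly 40 nodes is an assumption. At the slightly higher densities Novarum now favors, the per-node budget would be somewhat lower.

```python
def buildout_cost_range(area_sq_mi: float,
                        low_per_sq_mi: float = 100_000,
                        high_per_sq_mi: float = 150_000) -> tuple[float, float]:
    """Total cost range for a coverage area at the quoted per-square-mile figures."""
    return area_sq_mi * low_per_sq_mi, area_sq_mi * high_per_sq_mi


def implied_cost_per_node(cost_per_sq_mi: float, nodes_per_sq_mi: float = 40) -> float:
    """Per-node budget implied by a per-square-mile cost at a given node density."""
    return cost_per_sq_mi / nodes_per_sq_mi


print(buildout_cost_range(10))          # (1000000, 1500000)
print(implied_cost_per_node(100_000))   # 2500.0
print(implied_cost_per_node(150_000))   # 3750.0
```

Read this way, the quoted range works out to roughly $2,500 to $3,750 per installed node, all costs included, at 40 nodes per square mile.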

This time around, metro-scale Wi-Fi networks occupied 9 of the top 10 slots for best overall network, combining Novarum's various measures. Most of them, however, required a high-powered 802.11g adapter to achieve those marks. Such adapters are built into the Wi-Fi bridges offered by Ruckus and Peplink, which service providers recommend.

Novarum compared availability and performance between a regular adapter and the high-powered one they tested. They found a 36-percent improvement in performance and a 38-percent improvement in availability. But their big surprise came when they tested a generic, inexpensive, regular-powered 802.11n adapter against a regular 802.11g Wi-Fi radio: with 802.11n, performance jumped 20 percent and availability 26 percent.
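The "about two-thirds" claim can be checked against these percentages by dividing the 802.11n adapter's gains by the high-powered card's gains. This sketch treats the 36-percent figure as the high-powered card's performance gain and the 38-percent figure as its availability gain, mirroring the order of the 802.11n comparison. That pairing is an assumption.

```python
def share_of_gain(adapter_gain_pct: float, high_power_gain_pct: float) -> float:
    """Fraction of the high-powered card's improvement that another adapter achieves."""
    return adapter_gain_pct / high_power_gain_pct


# Percent improvements over a regular 802.11g card, as reported in the text.
# Pairing 38 percent with availability is an assumption (see lead-in).
high_power = {"performance": 36, "availability": 38}
n_adapter = {"performance": 20, "availability": 26}

for measure in ("performance", "availability"):
    share = share_of_gain(n_adapter[measure], high_power[measure])
    print(f"{measure}: {share:.0%}")
```

The code prints about 56 percent for performance and 68 percent for availability. "Two-thirds" therefore fits availability closely and performance somewhat more loosely.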

Belanger noted that 802.11n performance that high is a good sign for the future as people move to 802.11n, especially since the gain comes on an asymmetrical basis: the Wi-Fi networks are still pumping out old 802.11g. He said that equipment makers told him the improvement in speed is a bit of a mystery.

Beyond "better radio chips, a better antenna, multiple antennas more cleverly used," he said, there are "probably also other things like the way it does associating and roaming from one AP to the other" that help. In any case, an 802.11n USB adapter for Mac OS X and Windows costs about $60 to $70, while Peplink and Ruckus bridges run $100 to $400.

As they plan for the future, Belanger said that both mobile devices--specifically the iPhone--and mobile WiMax loom large. The company is now testing with an iPhone because the device has built-in seamless handoff between 2G EDGE and Wi-Fi networks. This lets the device have a much better overall service availability figure even when a Wi-Fi network is spotty.
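Basic probability gives a rough estimate of how much a device gains by falling back from Wi-Fi to a cellular connection: a location counts as covered if either network works there. The following is a minimal sketch, assuming the two networks fail independently. Novarum's 71- and 87-percent averages serve only as example inputs. They say nothing about the iPhone's actual EDGE coverage.

```python
def combined_availability(wifi: float, cellular: float) -> float:
    """Chance that at least one of two networks is usable at a location.

    Assumes the two networks fail independently, which is optimistic:
    spots with weak Wi-Fi may also have weak cell coverage.
    """
    return 1 - (1 - wifi) * (1 - cellular)


# Novarum's reported averages, used here only as illustrative inputs.
print(round(combined_availability(0.71, 0.87), 3))
```

With those inputs the estimate comes out around 96 percent. That is well above the roughly 85-percent mark Belanger described as feeling like a robust service, though correlated dead spots would pull the real figure lower.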

Likewise, pre-WiMax technology like Clearwire and mobile WiMax technology appearing next year will figure into their testing. They've already scanned Chico, Calif., and Eugene, Ore., with Clearwire's current gear.

While other firms audit and test wireless networks, Novarum appears to be the only company revealing this much information publicly. They may or may not be the most extensive testers of Wi-Fi networks, but they're certainly the most frank.

Posted by Glenn Fleishman at 12:00 AM | Permanent Link | Categories: Metro-Scale Networks, Municipal | 5 Comments

The Detroit News rounds up three large-scale projects in Michigan, each of which is facing its own challenges: The writer is rather kind when she writes, "St. Louis, Chicago and San Francisco recently scaled back projects because of low demand and problems with providers." (St. Louis is still stalled because of utility-pole electricity issues; Chicago is rethinking; SF is up in the air.) Projects in Oakland and Washtenaw counties are still quite limited and well over a year behind schedule, but the providers for each say they've overcome difficulties.